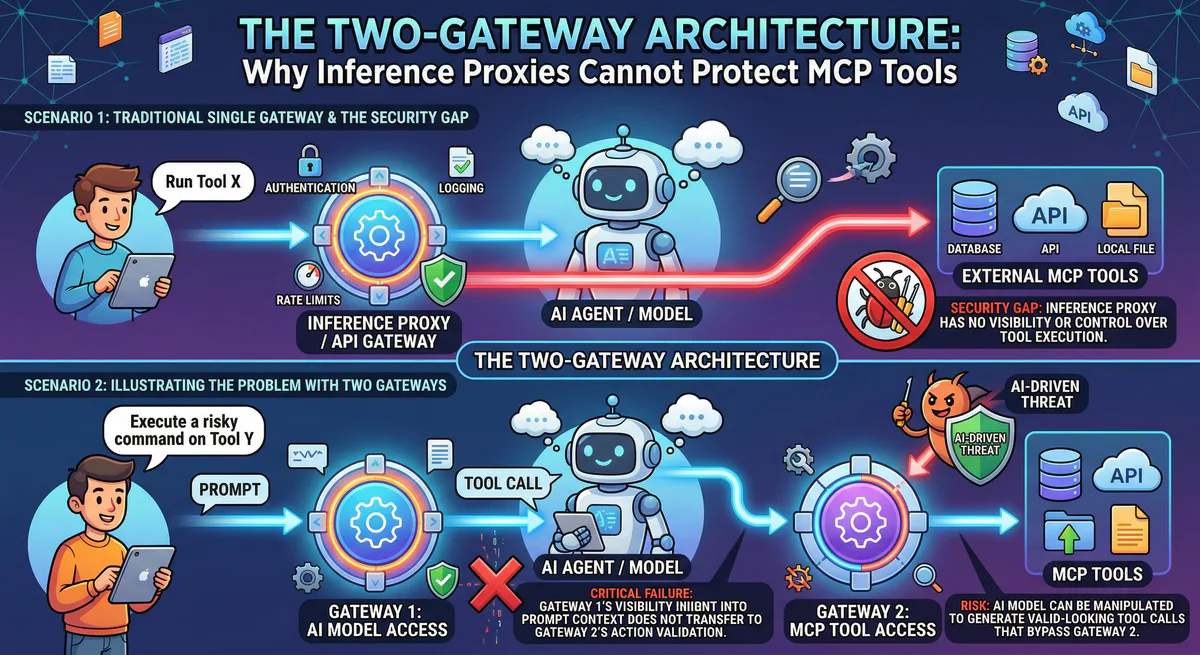

The sudden, sweeping standardization of the Model Context Protocol (MCP) in 2025 triggered a hidden infrastructure crisis. By 2026, enterprise architecture teams realized that an AI Agent is fundamentally split into two distinct operational vectors: the “Brain” and the “Hands”.

Attempting to govern both vectors with a single piece of infrastructure has been a catastrophic failure. Senior architectural circles have crystallized a new paradigm to solve this: The Two-Gateway Architecture.

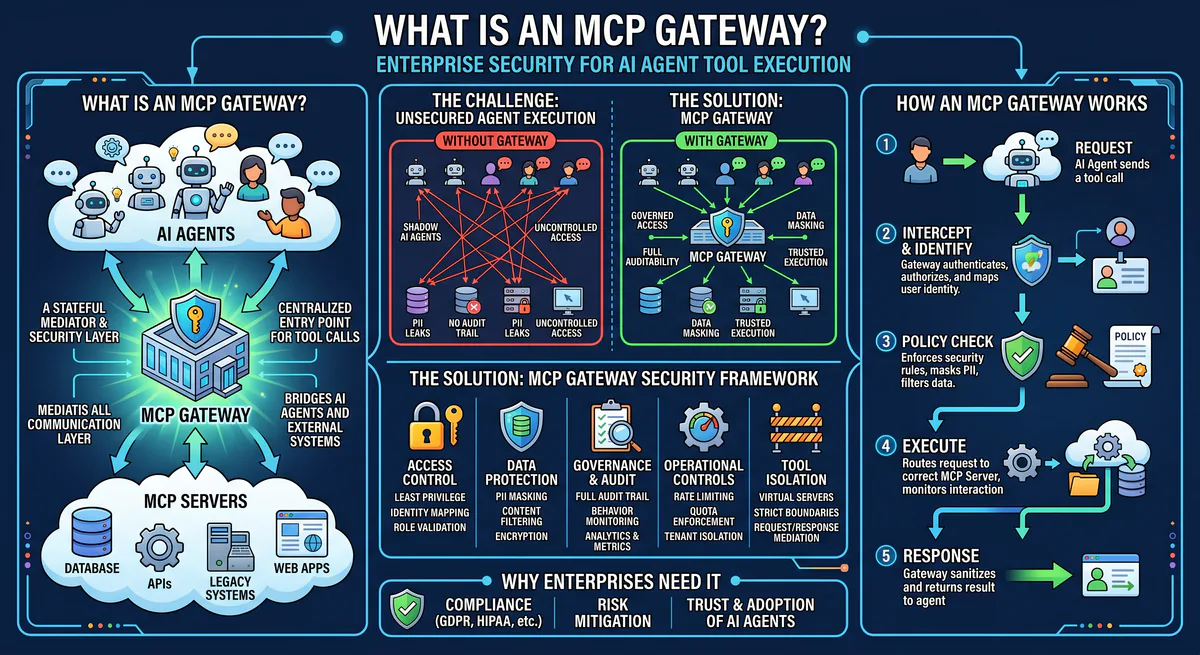

To secure an autonomous agent, you must decouple the infrastructure securing its intelligence from the infrastructure securing its actions. If you attempt to force stateful, bidirectional JSON-RPC tool traffic through an Inference Gateway, or force LLM prompt caching through an Execution Gateway, your architecture is structurally compromised.

This deep dive exposes why the legacy approach of routing everything through standard AWS API Gateways fails, precisely defines the modern split between Inference Gateways (like Cloudflare AI Gateway) and Execution Gateways (like Vinkius Edge), and dissects the absolute technological superiority of Vinkius for MCP capability governance.

1. The Brain vs. The Hands

An enterprise AI Agent requires two network paths:

- The Inference Path (The Brain): The Agent sends a prompt to an LLM (e.g., Anthropic, OpenAI) to ask, “What should I do next?” This traffic is largely stateless HTTP REST (/v1/chat/completions). It handles massive token payloads and requires caching, load balancing, and model routing.

- The Execution Path (The Hands): The Agent has decided on an action. It uses MCP (via Streamable HTTP or SSE) to tell your backend, “Drop the users table”. This traffic is stateful JSON-RPC 2.0. It requires strict RBAC, data redaction (DLP), and sandboxed compute environments.

A gateway built for the Brain is entirely blind to the Hands.
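
To make the split concrete, here is a minimal sketch of how the two payload shapes differ and how a client could pick a gateway for each. The gateway URLs, the model name, and the tool name are hypothetical placeholders, and the classification rule is a simplifying assumption rather than a description of any real product.

```python
# Hypothetical gateway URLs, used only for illustration.
INFERENCE_GATEWAY = "https://inference-gateway.example.com"
EXECUTION_GATEWAY = "https://execution-gateway.example.com"


def classify_path(body: dict) -> str:
    """Return "execution" for JSON-RPC 2.0 messages and "inference" for prompts."""
    if body.get("jsonrpc") == "2.0" and "method" in body:
        return "execution"
    if "messages" in body or "prompt" in body:
        return "inference"
    raise ValueError("unrecognized payload shape")


def gateway_for(body: dict) -> str:
    """Pick the gateway that should carry this payload."""
    if classify_path(body) == "execution":
        return EXECUTION_GATEWAY
    return INFERENCE_GATEWAY


# The Brain: a chat-completions style prompt (model name is a placeholder).
inference_request = {
    "model": "example-model",
    "messages": [{"role": "user", "content": "What should I do next?"}],
}

# The Hands: an MCP tool invocation expressed as a JSON-RPC 2.0 request
# (tool name and arguments are placeholders).
execution_request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "query_database", "arguments": {"sql": "SELECT 1"}},
}

for request in (inference_request, execution_request):
    print(classify_path(request), "->", gateway_for(request))
```

The point of the sketch is that the two messages share nothing structurally, so a proxy that only understands one shape has no basis for governing the other.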

2. Competitive Clarity: Defining 2026 Gateways

When evaluating infrastructure for Agents, engineering teams must deploy the right proxy for the right vector.

Cloudflare AI Gateway: The King of Inference

Cloudflare AI Gateway is undoubtedly the 2026 industry standard for Inference Observability. It sits exclusively on the Inference Path. If your Agent needs to route a prompt to OpenAI, fall back to Anthropic if OpenAI is down, or cache a semantic query to save money, Cloudflare is the tool you use.

The Misunderstanding: The industry consistently makes the amateur mistake of assuming Cloudflare AI Gateway evaluates MCP traffic. It does not. Cloudflare AI Gateway sits in front of the LLM, not in front of your internal infrastructure. If your Agent sends a tools/call JSON-RPC payload to your database via MCP, Cloudflare AI Gateway treats it, at best, as opaque bytes. It does not parse the JSON-RPC schema, so it cannot validate tool execution.
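
For contrast, this is roughly what tool execution validation looks like once a gateway does parse the JSON-RPC message. It is a minimal sketch: the roles, tool names, and allowlist are hypothetical, and the error code is simply one value from the range JSON-RPC 2.0 reserves for implementation-defined server errors.

```python
from typing import Optional

# Hypothetical role-to-tool allowlist; a real deployment would load this
# from policy configuration.
ROLE_ALLOWED_TOOLS = {
    "analyst": {"run_readonly_query"},
    "admin": {"run_readonly_query", "drop_table"},
}

# JSON-RPC 2.0 reserves -32000 to -32099 for implementation-defined
# server errors; using -32000 for policy denials is an assumption.
POLICY_DENIED = -32000


def authorize_tool_call(message: dict, role: str) -> Optional[dict]:
    """Return None if the call may proceed, else a JSON-RPC error response."""
    if message.get("method") != "tools/call":
        return None  # this sketch only governs tool invocations
    tool = message.get("params", {}).get("name")
    if tool in ROLE_ALLOWED_TOOLS.get(role, set()):
        return None
    return {
        "jsonrpc": "2.0",
        "id": message.get("id"),
        "error": {
            "code": POLICY_DENIED,
            "message": f"role '{role}' may not call tool '{tool}'",
        },
    }


drop_request = {
    "jsonrpc": "2.0",
    "id": 7,
    "method": "tools/call",
    "params": {"name": "drop_table", "arguments": {"table": "users"}},
}

print(authorize_tool_call(drop_request, "admin"))
print(authorize_tool_call(drop_request, "analyst"))
```

A proxy that forwards the body without reading `method` and `params.name` has nothing to base this decision on.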

AWS API Gateway & Bedrock AgentCore: The Legacy Compromise

AWS attempts to handle both the Brain and the Hands using its legacy API Gateway and the newer Bedrock AgentCore Runtime.

- The Stream Collapse: AWS API Gateway enforces a notorious 29-second default integration timeout and lacks native support for persistent Streamable HTTP or multiplexed SSE. Autonomous agents frequently spawn multi-step asynchronous processes that stream progress notifications back to their orchestrator. AWS severs these streams when the timeout expires, forcing the Agent’s reasoning engine to hallucinate the result of a process it assumes survived.

- The 64 KB Command Chokehold: AWS Bedrock introduces a lethal constraint: a 64 KB limit on command payloads for the Agent-to-Agent (A2A) protocol. Deploying a comprehensive MCP server on AgentCore routinely triggers violent ValidationException errors because modern schema manifests exceed the physical transport capabilities of the AWS router.

- Data Blindness: Because the API Gateway maps HTTP calls to Lambda blindly, it cannot inspect the egress JSON stream. If your database leaks a password hash, the Lambda function serializes it, the AWS API Gateway streams it, and the Agent ingests it as context.
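
A payload budget like the 64 KB limit above can be checked before deployment. The sketch below assumes the limit means 64 × 1024 bytes of UTF-8 encoded JSON, which is an interpretation and not something the limit itself spells out here. The manifest contents are invented for illustration.

```python
import json

# Assumption: "64 KB" is read as 64 * 1024 bytes of serialized JSON.
PAYLOAD_LIMIT_BYTES = 64 * 1024


def payload_size_bytes(payload: dict) -> int:
    """Size of the payload once serialized as compact UTF-8 JSON."""
    return len(json.dumps(payload, separators=(",", ":")).encode("utf-8"))


def fits_limit(payload: dict, limit: int = PAYLOAD_LIMIT_BYTES) -> bool:
    """True if the serialized payload stays within the byte budget."""
    return payload_size_bytes(payload) <= limit


def make_manifest(tool_count: int, description_length: int) -> dict:
    """Build a fake tool manifest with padded descriptions (illustrative only)."""
    return {
        "tools": [
            {"name": f"tool_{i}", "description": "x" * description_length}
            for i in range(tool_count)
        ]
    }


small_manifest = make_manifest(tool_count=5, description_length=100)
large_manifest = make_manifest(tool_count=200, description_length=500)

for label, manifest in (("small", small_manifest), ("large", large_manifest)):
    print(label, payload_size_bytes(manifest), fits_limit(manifest))
```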

3. The Absolute Baseline: Market Comparison

| Architectural Role | AWS API Gateway / Bedrock | Cloudflare AI Gateway | Vinkius AI Gateway |
|---|---|---|---|
| Primary Classification | Legacy REST Proxy | Inference Gateway | MCP Execution Gateway |
| Core Function | HTTP-to-Lambda mapping | Prompt / Token routing | JSON-RPC V8 Sandboxing |
| MCP Transport Support | Poor (Breaks streaming) | Basic (Manual Workers setup) | Native Streamable HTTP & SSE |
| Max Integration Timeout | 29s (Fatal for Agents) | Provider Dependent | Managed Stream/Idle Timeout Limits |
| In-Flight Data Mutation | None (Transparent proxy) | Semantic Caching | Native .redactPII() interception |
| Execution Sandboxing | Lambda (High latency) | Cloudflare Worker (Manual) | Native Isolates (3-5ms restore) |

4. The Vinkius Edge: Purpose-Built Execution Infrastructure

If Cloudflare AI Gateway protects the LLM, our Vinkius AI Gateway protects your Enterprise.

We built Vinkius exclusively from the ground up to serve as the runtime engine for the 2026 Model Context Protocol standard. We do not proxy Inference tokens. We intercept the Streamable HTTP execution stream, hydrate a defense-grade environment, and enforce absolute control over the MCP tools your agents attempt to call.

4.1 V8 Isolate Sandboxing (Zero Attack Surface)

No MCP server tool deployed to our gateway runs in a standard Docker container or as a raw Node.js script. Each one runs inside a mathematically sealed V8 JavaScript isolate. There is zero raw access to the global process object, no open fs filesystem handles, and no raw network bindings.

- The CPU Guillotine: Execution is hard-capped. Our custom Isolate monitors the CPU cycle count at the C++ level. If an executed MCP Tool enters an infinite loop (e.g., a runaway regex or malicious payload), the guillotine severs execution at exactly 5 seconds.

- Dual-Stack SSRF Protection: If an agent calls a tool that fetches a URL, DNS is pre-resolved and IP-pinned across both the Node.js and internal PHP layers, making internal network port-scanning impossible.
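
The pre-resolve-and-pin idea behind the SSRF bullet can be sketched in a few lines. This is an illustration under stated assumptions, not the gateway's implementation: the function names are hypothetical, the resolver is injected so the example runs without network access, and "blocked" is taken to mean any address Python's `ipaddress` module does not classify as globally routable.

```python
import ipaddress
from typing import Callable, List


class BlockedAddressError(Exception):
    """Raised when a hostname resolves to a non-public address."""


def is_allowed(ip: str) -> bool:
    """Allow only globally routable addresses (IPv4 or IPv6)."""
    return ipaddress.ip_address(ip).is_global


def pin_public_address(host: str, resolve: Callable[[str], List[str]]) -> str:
    """Resolve once, reject the host if ANY answer is non-public, return the pinned IP.

    The caller must connect to the returned IP directly instead of
    resolving the hostname a second time, which is what defeats
    DNS-rebinding tricks.
    """
    addresses = resolve(host)
    if not addresses:
        raise BlockedAddressError(f"{host} did not resolve")
    for address in addresses:
        if not is_allowed(address):
            raise BlockedAddressError(f"{host} resolves to blocked address {address}")
    return addresses[0]


# Fake resolver standing in for a real DNS lookup (hostnames are made up).
FAKE_DNS = {
    "public.example": ["8.8.8.8"],
    "metadata.example": ["169.254.169.254"],
    "intranet.example": ["10.0.0.5"],
}


def fake_resolve(host: str) -> List[str]:
    return FAKE_DNS.get(host, [])


for hostname in FAKE_DNS:
    try:
        print(hostname, "-> pinned to", pin_public_address(hostname, fake_resolve))
    except BlockedAddressError as error:
        print(hostname, "-> blocked:", error)
```

In a real fetch path the resolver would be an actual DNS lookup, for example one built on `socket.getaddrinfo`, and the check would run in every layer that can open a socket.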

4.2 Forensic Auditing (Mathematically Tamper-Proof)

If an AI Agent executes a tool that drops a database table, your CISO will ask: “Can you mathematically prove the Agent ordered this action?” AWS and Cloudflare can only offer basic text logs.

Every stream, connection, and tool call routed through our infrastructure is serialized, hash-chained via SHA-256, and cryptographically signed with Ed25519 session keys. We maintain a 3-tier PKI hierarchy rotated every 24 hours. The audit trail is streamed in real-time to Splunk HEC or Datadog via Redis Streams. You can fundamentally prove in a court of law exactly what the AI Agent did.
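
The hash-chaining half of that pipeline is easy to illustrate. The sketch below is a simplified model with a hypothetical record layout: each record's SHA-256 digest covers the previous record's digest, so altering any earlier event breaks verification of everything after it. Ed25519 signing is deliberately left out because Python's standard library does not provide it. A signed variant would sign each digest with a session key.

```python
import hashlib
import json
from typing import List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, event: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the event."""
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain: List[dict], event: dict) -> dict:
    """Append an event, linking it to the digest of the previous record."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    record = {"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)}
    chain.append(record)
    return record


def verify_chain(chain: List[dict]) -> bool:
    """Recompute every digest and check that each record links to its predecessor."""
    prev_hash = GENESIS_HASH
    for record in chain:
        if record["prev"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["event"]):
            return False
        prev_hash = record["hash"]
    return True


audit_log: List[dict] = []
append_record(audit_log, {"method": "tools/call", "tool": "run_readonly_query"})
append_record(audit_log, {"method": "tools/call", "tool": "drop_table"})
print("intact:", verify_chain(audit_log))

# Tampering with an earlier event invalidates the chain.
audit_log[0]["event"]["tool"] = "something_else"
print("after tampering:", verify_chain(audit_log))
```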

4.3 Stateful Hibernation in 3-5ms

Agents idle for long periods while the LLM generates tokens. In AWS Lambda, waiting for an LLM means paying for idle compute, or suffering 500ms+ cold starts when the Lambda drops.

Our Edge infrastructure implements V8 heap snapshot restoration. The exact millisecond the MCP tool’s internal execution pauses, the isolate is hibernated. Upon the next JSON-RPC tools/call traversing the Streamable HTTP pipe, the specific sandbox state—complete with memory variables, open SDK references, and finite state machine progress—is pulled from storage and restored to RAM in 3 to 5 milliseconds. You achieve serverless billing while maintaining the persistent session required by MCP.
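
As a rough application-level analogy only, the hibernate-and-restore cycle can be pictured as serializing session state between tool calls and rebuilding it on the next one. This is not how a V8 heap snapshot works internally. The class, the field names, and the in-memory store below are all hypothetical stand-ins.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class ToolSession:
    """Hypothetical per-session state an MCP tool might carry between calls."""
    session_id: str
    step: int = 0
    variables: dict = field(default_factory=dict)


# Stand-in for durable storage; a real system would use an external store.
_SNAPSHOTS: Dict[str, str] = {}


def hibernate(session: ToolSession) -> None:
    """Serialize the session and drop it from live memory."""
    _SNAPSHOTS[session.session_id] = json.dumps(asdict(session))


def restore(session_id: str) -> ToolSession:
    """Rebuild the session from its stored snapshot."""
    return ToolSession(**json.loads(_SNAPSHOTS[session_id]))


live = ToolSession(session_id="session-123", step=2, variables={"cursor": 40})
hibernate(live)

resumed = restore("session-123")
resumed.step += 1  # the next tools/call continues where the last one stopped
print(resumed)
```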

4.4 In-Flight DLP (Egress Firewalls)

As established in our framework Vurb.ts, we operate the industry’s only real-time Egress Firewall.

When your backend database streams sensitive PII back to the agent, a standard gateway routes it directly to Anthropic or OpenAI as context. Our AI Gateway intercepts the payload, applies the schema-driven Presenter, and physically destroys the PII fields in RAM before they cross the outbound wire. The Agent’s context window receives [REDACTED]. Even your own poorly configured tools cannot leak data to a third-party model.
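
The effect of egress redaction can be shown with a small sketch. It assumes a flat set of field names marks what counts as PII. The field names and the function are hypothetical and do not represent the Vurb.ts Presenter API.

```python
from typing import Any, FrozenSet

REDACTED = "[REDACTED]"

# Hypothetical set of field names treated as PII.
PII_FIELDS: FrozenSet[str] = frozenset({"email", "ssn", "password_hash"})


def redact(value: Any, pii_fields: FrozenSet[str] = PII_FIELDS) -> Any:
    """Return a copy of the value with PII fields replaced, at any nesting depth."""
    if isinstance(value, dict):
        return {
            key: REDACTED if key in pii_fields else redact(item, pii_fields)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item, pii_fields) for item in value]
    return value


tool_result = {
    "rows": [
        {"id": 1, "name": "Ada", "email": "ada@example.com", "password_hash": "abc123"},
        {"id": 2, "name": "Lin", "email": "lin@example.com", "password_hash": "def456"},
    ]
}

print(redact(tool_result))
```

Because the function builds a new structure instead of mutating the input, the backend's original result is untouched and only the redacted copy goes out to the model.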

5. Govern the Protocol, Or It Governs You

You cannot rely on Inference Gateways to parse the complex execution nuance of JSON-RPC intent over Streamable HTTP, nor can you rely on generic API proxies that shatter under long-running agentic streams.

The Two-Gateway Architecture is the only viable path forward. Use Cloudflare AI Gateway to cache and route your Inference tokens. But when that intelligence reaches out to touch your database, invoke our AI Gateway.

We intercept the execution traffic, execute the action in a V8 isolate, strip PII before it crosses the outbound wire, and hash-chain the audit trail with SHA-256 under Ed25519 signatures. Stop treating your agents like standard REST clients. Protect your execution layer.
